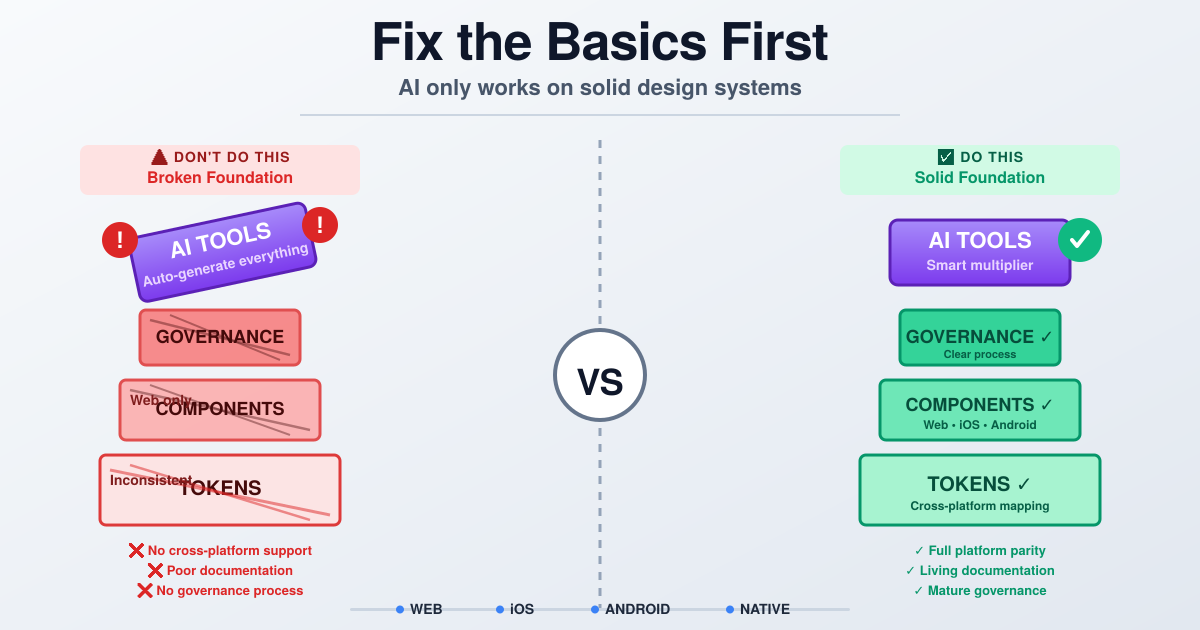

Don’t Sell AI for Design Systems — Fix the Basics First

Everyone’s talking about AI + design systems like it’s the next growth hack.

Conferences fill with MCP server demos, Figma Make integrations, token automation pitches, and consultants promising to “10x your velocity with agentic workflows.”

If your company is already running at that level — congratulations. You’re in the tiny, enviable minority.

But here’s the ugly truth: the vast majority of companies are not ready for AI to touch their design systems. 77% of organizations rate their data as average, poor, or very poor for AI readiness, and 53% have no immediate plans to integrate AI into design systems due to privacy concerns, lack of expertise, and budget constraints.

They don’t need a flashy automation layer. They need core systems, discipline, and cross-platform thinking. And before you pitch AI consulting, you need to understand why.

The real state of play: most teams are ten years behind

I hear the same pattern over and over.

- A company “adopted Figma” 2–3 years ago and treats that as its design system.

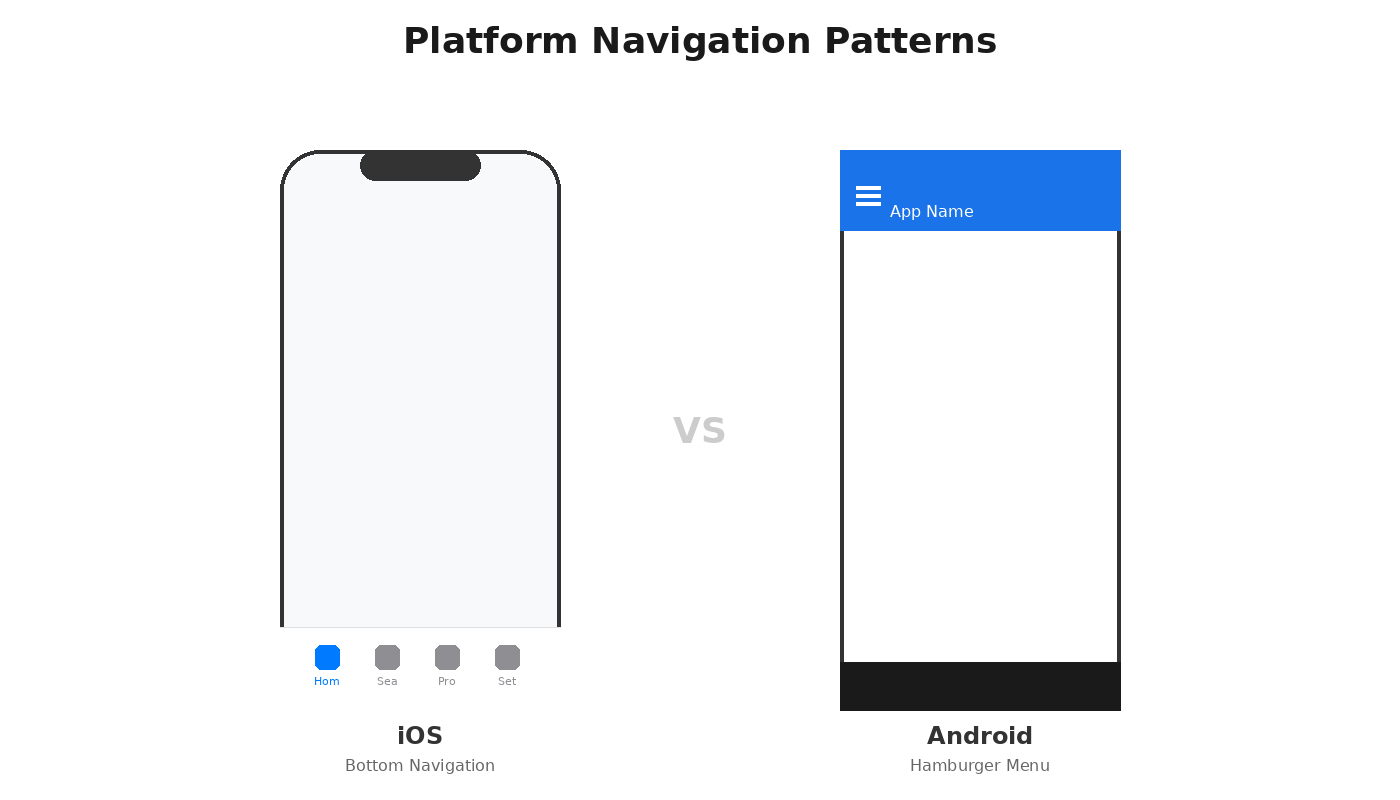

- They build components for one platform (usually web) and call it a day.

- Native platforms — iOS and Android — are afterthoughts, or unsupported.

- Tokens are misunderstood or used inconsistently. There’s no plan for platform mapping, themes, or runtime usage.

- Governance is ad hoc. Contribution, review and release cadence are chaotic.

- Leadership expects automations and AI to instantly close all these gaps.

That expectation is naive. You can’t bolt AI onto a house with no foundation and expect it to hold.

Why AI isn’t the immediate answer

AI is powerful — increasingly so — but it’s not a magic replacement for design system discipline. Here’s what many teams fail to appreciate:

- AI amplifies what you feed it. 95% of organizations faced data challenges during AI implementation. If your tokens are inconsistent, components poorly documented, or variant logic unstandardized, the AI will learn and amplify those mistakes.

- Tools like LLMs make mistakes. They hallucinate, misapply semantics, and confuse edge cases. AI systems reflect the limitations and biases in their training data, and over-reliance without scrutiny risks perpetuating these biases and creating disconnected user experiences.

- Native platform parity is hard. Mapping tokens and components across web, iOS, and Android requires deliberate decisions about spacing, type, elevation, and native patterns, not an automated template. AI trained mostly on web patterns will confidently violate Apple’s Human Interface Guidelines (HIG) or Material Design.

- Organizational readiness matters more than technology. People, processes, ownership, and governance matter. AI can accelerate a mature process — it won’t create one.

Who should buy AI services — and who shouldn’t

If you’re an organization with:

- well-established cross-platform tokens,

- rigorous contribution and review workflows, and

- clear engineering ownership for token runtime

— then AI can multiply your velocity. That’s where automation, code generation, and assistive tools pay off.

If you’re anywhere earlier than that, your first investments should be:

- platform-agnostic tokens and mapping strategies,

- standards for responsive components and accessibility,

- governance: contribution process, review gates, release cadence, and ownership,

- documentation: living docs that aren’t just Figma files.

Only when those are stable should you bring AI into the room.

Practical roadmap: where to focus before AI

- Standardize tokens and platform mapping. Define what a color token means on web vs iOS vs Android. Document mapping rules.

- Ship native support. Ensure your core components exist and are implemented across platforms with parity guidelines (not 1:1 clones — platform-appropriate variants).

- Institutionalize governance. Who reviews tokens? Who approves a breaking change? What’s the release cadence?

- Make documentation executable. Living examples, code snippets, token exports, and runtime references.

- Measure — then automate. Track adoption, implementation gaps, and error rates. Use those metrics to justify automation or AI experiments.
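To make the first step of that roadmap concrete, here is a minimal sketch of what "document mapping rules" could look like in code. The token name, the hex value, and the naming conventions chosen for each platform are all assumptions for illustration, not a standard; your own rules will differ.

```typescript
// Hypothetical token model: names, values, and naming rules are illustrative only.
type Platform = "web" | "ios" | "android";

interface ColorToken {
  name: string; // platform-agnostic dotted name, e.g. "color.feedback.error"
  hex: string;  // canonical value as #RRGGBB
}

// Translate the dotted token name into one platform's naming convention.
function platformName(token: ColorToken, platform: Platform): string {
  const parts = token.name.split(".");
  switch (platform) {
    case "web":
      // CSS custom property style: --color-feedback-error
      return "--" + parts.join("-");
    case "ios":
      // lowerCamelCase style: colorFeedbackError
      return parts
        .map((p, i) => (i === 0 ? p : p.charAt(0).toUpperCase() + p.slice(1)))
        .join("");
    case "android":
      // snake_case resource style: color_feedback_error
      return parts.join("_");
  }
}

// Build the full mapping for one token across all platforms.
function mapToken(token: ColorToken): Record<Platform, string> {
  return {
    web: platformName(token, "web"),
    ios: platformName(token, "ios"),
    android: platformName(token, "android"),
  };
}

// Example usage with a made-up token.
const errorRed: ColorToken = { name: "color.feedback.error", hex: "#D32F2F" };
const mapping = mapToken(errorRed);
```

The point is not the string manipulation. It is that the mapping lives in one reviewed place instead of in three teams' heads.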

What’s actually safe to use right now

If you’ve done the basics, here’s what works:

Documentation automation: Claude Skills can package your design system knowledge — naming conventions, usage guidelines, component structure — into reusable contexts that generate consistent documentation as your system evolves. 63% of teams are using AI for documentation generation because it’s low-risk, high-value.

Context-aware code generation: Figma’s MCP server provides AI tools with references to specific variables, components, and styles from your actual design system, making generated code more precise and reducing errors.

This isn’t blind generation — it’s translation with guardrails. The new Code Connect UI offers AI-powered component mapping suggestions based on your actual source files.

Token auditing: Figma’s “Check designs” linter recommends the correct variable based on context, catching “designer used #FF0000 instead of the error-red token” before handoff. AI excels at repetitive validation.
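The simplest version of this kind of audit does not need AI at all. The sketch below is not how Figma's linter works; it is a hypothetical exact-match check, with a made-up token table, that flags hard-coded six-digit hex colors and suggests a token when one has the same value.

```typescript
// Hypothetical token table; names and values are placeholders.
const colorTokens: Record<string, string> = {
  "error-red": "#FF0000",
  "surface-default": "#FFFFFF",
};

interface Finding {
  rawValue: string;
  suggestedToken: string | null; // null when no token has this exact value
}

// Find hard-coded 6-digit hex colors in a style string and
// suggest a token whose value matches exactly (case-insensitive).
function auditRawColors(styles: string): Finding[] {
  const matches = styles.match(/#[0-9a-fA-F]{6}\b/g) ?? [];
  return matches.map((raw) => {
    const hit = Object.entries(colorTokens).find(
      ([, value]) => value.toLowerCase() === raw.toLowerCase()
    );
    return { rawValue: raw, suggestedToken: hit ? hit[0] : null };
  });
}

// Example usage: one value matches a token, one does not.
const findings = auditRawColors("color: #ff0000; background: #123456;");
```

A context-aware linter goes further than exact matching, but a check like this already catches the most common slip.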

What’s still risky

Unsupervised component generation: AI-generated components might have good technical quality but lack cultural specificity, emotional understanding, or platform appropriateness. Don’t ship AI-generated components without designer + engineer review.

Cross-platform assumptions: AI doesn’t inherently understand that iOS buttons need 44pt tap targets while Android uses 48dp. It will confidently give you the wrong answer.
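Rules like this are cheap to encode so that neither a human nor a model has to remember them. Here is a minimal sketch that uses only the 44pt and 48dp figures cited above; the `ComponentSpec` shape and the function name are hypothetical.

```typescript
type NativePlatform = "ios" | "android";

// Minimum tap-target edge length per platform, using the figures from the text:
// 44pt on iOS, 48dp on Android.
const MIN_TAP_TARGET: Record<NativePlatform, { size: number; unit: string }> = {
  ios: { size: 44, unit: "pt" },
  android: { size: 48, unit: "dp" },
};

// Hypothetical description of a component's touchable area.
interface ComponentSpec {
  name: string;
  platform: NativePlatform;
  width: number;
  height: number;
}

// Returns null when the spec meets the platform minimum, otherwise a message.
function checkTapTarget(spec: ComponentSpec): string | null {
  const min = MIN_TAP_TARGET[spec.platform];
  if (spec.width >= min.size && spec.height >= min.size) {
    return null;
  }
  return (
    `${spec.name} (${spec.platform}) is ${spec.width}x${spec.height}${min.unit}; ` +
    `minimum is ${min.size}x${min.size}${min.unit}`
  );
}

// The same 44x44 button passes on iOS and fails on Android.
const iosResult = checkTapTarget({ name: "Button", platform: "ios", width: 44, height: 44 });
const androidResult = checkTapTarget({ name: "Button", platform: "android", width: 44, height: 44 });
```

Run a check like this against anything an AI tool generates for native platforms, and the "confidently wrong" answer gets caught before review instead of after release.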

Replacing human judgment: ServiceNow, SAP Fiori, and other enterprise design systems emphasize using AI only when it genuinely solves problems better than traditional methods, with mandatory human oversight.

How to approach AI responsibly

If you’re ready to experiment:

- Start in a sandbox. Use replicas of your system data, not production.

- Validate outputs. Always pair AI suggestions with human review — designers + platform engineers.

- Track mistakes. Log hallucinations, mapping errors, and edge cases. Use them to refine prompts and datasets.

- Invest in automated validation. Your deployment pipeline needs checkpoints where generated code or tokens are validated against explicit rules before they reach production.

- Be honest with clients. If you’re selling AI services, set clear expectations: it will assist, not replace, and it requires mature inputs.
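The pipeline checkpoint and the mistake log from the list above can start very small. The sketch below assumes a hypothetical `GeneratedToken` shape and two made-up rules; it is a starting point under those assumptions, not a complete gate.

```typescript
// Hypothetical shape of a token proposed by an AI tool.
interface GeneratedToken {
  name: string;
  value: string;
}

interface Violation {
  token: string;
  rule: string;
}

interface Rule {
  id: string;
  passes: (t: GeneratedToken) => boolean;
}

// Illustrative rules only; replace with your system's real conventions.
const rules: Rule[] = [
  {
    id: "name-is-dotted-lowercase",
    passes: (t) => /^[a-z]+(\.[a-z0-9-]+)+$/.test(t.name),
  },
  {
    id: "color-value-is-hex",
    // Only applies to tokens in the "color." namespace.
    passes: (t) => !t.name.startsWith("color.") || /^#[0-9a-fA-F]{6}$/.test(t.value),
  },
];

// Check every generated token against every rule and collect the failures.
// The returned list doubles as a log of mistakes to review later.
function validateGenerated(tokens: GeneratedToken[]): Violation[] {
  const violations: Violation[] = [];
  for (const t of tokens) {
    for (const r of rules) {
      if (!r.passes(t)) {
        violations.push({ token: t.name, rule: r.id });
      }
    }
  }
  return violations;
}

// Example usage: a CI step would fail the build when this list is non-empty.
const violations = validateGenerated([
  { name: "color.feedback.error", value: "#D32F2F" },
  { name: "color.brand.primary", value: "blue" },
]);
```

Keeping the violations as data rather than just failing the build means the same output feeds human review and prompt refinement.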

Final note to would-be AI consultants

Yes, some people have a leg up today. You probably know more than many in-house teams. But being “better informed” does not make you an expert. The space is nascent. Tools misbehave. Business risk is real.

At Schema 2025, Figma emphasized that design systems are evolving into living systems that serve as the translation layer AI needs — but that only works if the system itself is solid first. The tools are real: Figma MCP is shipping, Claude Skills are available, Make Kits are in early access. But they’re multipliers, not foundations.

Do your homework. Test tools. Build repeatable, safe processes. Start by helping organizations do the basics right — tokens, native parity, governance — and only then introduce AI to scale what actually works.

If you do this, you won’t be selling vaporware; you’ll be building durable systems that let AI create real value.
